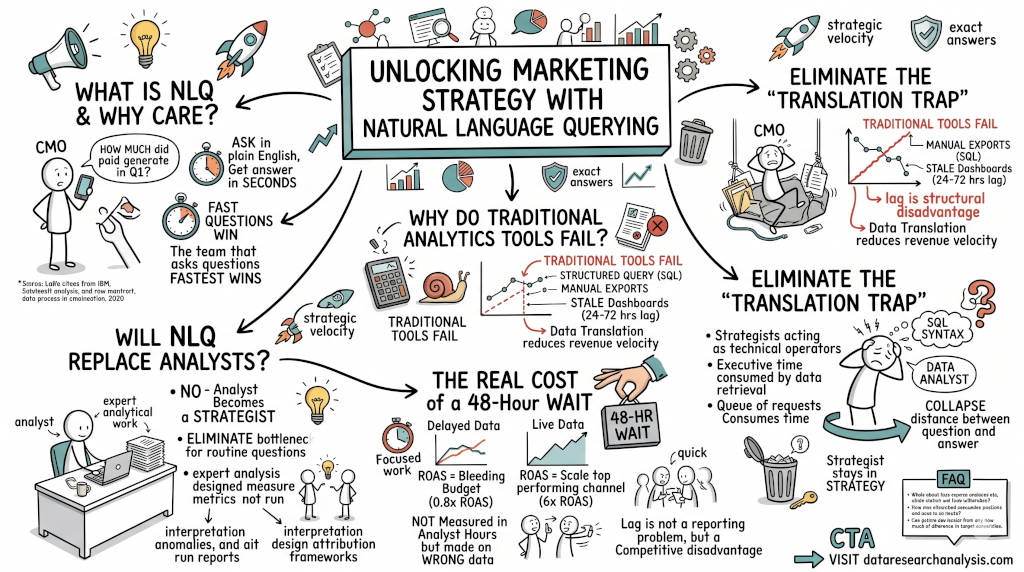

Summary: Marketing teams lose 400 hours a year translating data instead of acting on it. Natural language querying ends that. Ask your data a question in plain English. Get an answer in seconds. No SQL. No analyst ticket. No 48-hour lag. The team that stops translating and starts deciding wins.

1. What Is Natural Language Querying and Why Should Marketers Care?

The Answer: Natural language querying lets you ask your data a question in plain English and receive an answer in seconds. No SQL. No analyst ticket. No 48-hour wait. For marketers, this is not a convenience feature. It is the difference between a decision made on live data and one made on a three-day-old report. The team that asks questions fastest wins.

The Language of Data Has Always Excluded the People Who Need It Most

Marketing leaders run multimillion-dollar budgets. They own campaign strategy, attribution models, and board-level reporting. Yet the one question that drives every decision, "What is actually working?", has historically required a technical middleman to answer.

SQL is the language of databases. It is not the language of strategy.

A CMO should not need to write SELECT SUM(revenue) FROM campaigns WHERE channel = 'paid' AND date >= '2025-01-01' AND date < '2025-04-01' to answer "How much did our Q1 paid campaigns generate?" That question should be asked in English and answered in under five seconds.

Natural language querying removes the technical barrier entirely. IBM defines NLP as a subfield of computer science and AI that "uses machine learning to enable computers to understand and communicate with human language." In other words, the machine now speaks your language, not the other way around.

Source: IBM Think — What is natural language processing? https://www.ibm.com/think/topics/natural-language-processing

The marketing office that still routes every data question through an analyst queue is not running a strategy operation. It is running a translation service.

2. Why Do Traditional Analytics Tools Fail Modern Marketing Teams?

The Answer: Traditional analytics tools were built for data engineers, not decision-makers. They require structured query knowledge, manual export workflows, and static dashboards that lag 24 to 72 hours behind real campaign performance. By the time a CMO reads a report, the market has already moved. The tool did not fail to produce data. It failed to produce it at the speed of a decision.

The Speed Problem Is a Revenue Problem

Marketing decisions have a shelf life. A campaign performing at 4x ROAS on Tuesday does not perform identically on Friday. Budget reallocation that waits for a Thursday report is allocating Tuesday's insight to Friday's reality.

Gartner's forecasts show the scale of savings at stake when a conversational interface replaces manual handling. In a 2022 release, Gartner predicted that conversational AI deployments will reduce contact center agent labor costs by USD 80 billion in 2026. That forecast concerns customer service, not analytics, but the mechanism is the same: a natural language interface removes a human translation step from a routine request.

Source: Gartner, via IBM Think — What is conversational analytics? https://www.ibm.com/think/topics/conversational-analytics. Original Gartner release: https://www.gartner.com/en/newsroom/press-releases/2022-08-31-gartner-predicts-conversational-ai-will-reduce-contac

The Salesforce State of Marketing (10th Edition) surveyed 4,500 marketing leaders worldwide. The finding is direct: 83% of marketers recognize the urgent need for data-driven, real-time personalization. Only 1 in 4 are satisfied with how they actually use data to power those decisions.

Source: Salesforce State of Marketing, 10th Edition https://www.salesforce.com/resources/research-reports/state-of-marketing/

That gap, 83% recognizing the need and only one in four satisfied with how they use data, is not a strategy problem. It is an infrastructure problem. The tools do not match the demand.

Traditional platforms ask marketers to adapt to the machine. Natural language querying inverts the relationship. The machine adapts to the marketer.

3. How Does Natural Language Querying Eliminate the Translation Trap?

The Answer: The Translation Trap is the hidden cost of having strategists act as technical operators. Every time a CMO files a ticket to pull a report, executive time is being consumed by data retrieval. Natural language querying collapses the distance between the question and the answer. The strategist stays in strategy. The data responds on demand.

The Trap Has a Measurable Price Tag

Marketing teams spend an estimated 400 hours per year on manual data maintenance and report preparation. At 40 hours a week, that is ten full work weeks. Not producing campaigns, not analyzing performance, not refining strategy. Translating data between systems and formats.

Natural language querying does not just speed up the query. It takes routine questions out of the query queue entirely.

Here is the operational shift:

Before NLQ: CMO identifies a performance question. CMO opens a ticket. Analyst builds the query. Analyst exports the data. Analyst formats the report. CMO receives the answer 48 hours later and acts on outdated intelligence.

After NLQ: CMO types the question in plain English. The system converts it to SQL, runs the query, and returns an answer in under five seconds. No ticket. No lag. No translation cost.
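The three steps in the "After NLQ" flow (translate, run, answer) can be sketched in Python with the standard-library sqlite3 module. This is a minimal illustration under stated assumptions: the hypothetical `translate` function is a fixed lookup table standing in for the language model a real NLQ layer would use, and the `campaigns` table, its columns, and the sample rows are invented for the example. Returning the generated SQL alongside the value keeps the answer auditable.

```python
import sqlite3
from dataclasses import dataclass

# Hypothetical stand-in for the language model. A real NLQ layer would
# generate SQL from the question and the schema; here we simply look it up.
QUESTION_TO_SQL = {
    "how much did our q1 paid campaigns generate?": (
        "SELECT SUM(revenue) FROM campaigns "
        "WHERE channel = 'paid' AND date >= '2025-01-01' AND date < '2025-04-01'"
    ),
}


@dataclass
class Answer:
    question: str
    sql: str      # the generated query, kept so it can be audited
    value: float


def translate(question: str) -> str:
    """Step 1: plain English -> SQL (hypothetical lookup, not a real model)."""
    key = question.strip().lower()
    if key not in QUESTION_TO_SQL:
        raise ValueError(f"No translation available for: {question!r}")
    return QUESTION_TO_SQL[key]


def ask(conn: sqlite3.Connection, question: str) -> Answer:
    sql = translate(question)                   # step 1: translate
    (value,) = conn.execute(sql).fetchone()     # step 2: run the query
    return Answer(question, sql, value or 0.0)  # step 3: answer (SUM over no rows is NULL)


# Invented sample data for illustration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE campaigns (channel TEXT, date TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO campaigns VALUES (?, ?, ?)",
    [
        ("paid", "2025-01-15", 12000.0),
        ("paid", "2025-03-31", 8000.0),
        ("paid", "2025-04-02", 5000.0),     # Q2: excluded
        ("organic", "2025-02-10", 3000.0),  # not paid: excluded
    ],
)
answer = ask(conn, "How much did our Q1 paid campaigns generate?")
print(answer.value)  # 20000.0
print(answer.sql)
```

The half-open date range (on or after January 1, before April 1) is what makes the query match "Q1" exactly.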

IBM's conversational analytics research documents the enterprise-level impact: organizations that deploy natural language interfaces across analytics workflows see material reductions in analyst labor overhead and significant gains in decision velocity.

Source: IBM Think — What is conversational analytics? https://www.ibm.com/think/topics/conversational-analytics

The marketing office of 2026 cannot afford to route every strategic question through a technical queue. The competitor who asks the question and gets the answer in real time wins the budget reallocation, the pivot, and the quarter.

4. What Is the Real Cost of Waiting 48 Hours for a Data Answer?

The Answer: The cost is not measured in analyst hours. It is measured in decisions made on wrong data. A campaign bleeding budget at 0.8x ROAS for 48 hours while waiting for a report costs real money. A channel delivering 6x ROAS that does not receive additional budget because the signal came in too late costs real revenue. Lag is not a reporting problem. It is a competitive disadvantage priced into every campaign cycle.
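The lag cost in this answer is simple arithmetic. The sketch below uses hypothetical figures (USD 100 of spend per hour on a channel, a 48-hour wait) with the 0.8x and 6x ROAS values above, where ROAS is revenue divided by ad spend. The reallocation figure assumes the strong channel keeps its 6x ROAS on the extra budget, which real channels rarely do at scale, so treat it as an upper bound rather than a forecast.

```python
def lag_cost(hourly_spend: float, hours: float, roas: float) -> dict:
    """Spend, revenue, and net return for a channel left running during a reporting lag."""
    spend = hourly_spend * hours
    revenue = spend * roas  # ROAS = revenue / spend
    return {
        "spend": round(spend, 2),
        "revenue": round(revenue, 2),
        "net": round(revenue - spend, 2),
    }


def forgone_revenue(hourly_spend: float, hours: float,
                    weak_roas: float, strong_roas: float) -> float:
    """Revenue missed by leaving budget on the weak channel instead of the strong one.

    Assumes linear returns: the strong channel earns its current ROAS on every
    extra dollar. Treat the result as an upper bound.
    """
    spend = hourly_spend * hours
    return round(spend * (strong_roas - weak_roas), 2)


# Hypothetical figures: USD 100/hour for a 48-hour reporting lag.
weak = lag_cost(hourly_spend=100.0, hours=48, roas=0.8)
print(weak)  # {'spend': 4800.0, 'revenue': 3840.0, 'net': -960.0}
print(forgone_revenue(100.0, 48, weak_roas=0.8, strong_roas=6.0))  # 24960.0
```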

Delayed Data Is the Same as No Data

Consider this scenario: Your team runs five paid channels simultaneously. Google, Meta, LinkedIn, programmatic, and email. One channel is underperforming. One is breaking records. You will not know which is which until Thursday's report. It is Tuesday.

Every hour of budget spent on the underperformer between now and Thursday is waste the report will explain after the fact.

Natural language querying makes this scenario obsolete. The question "Which of our active channels has the lowest ROAS this week?" returns a live answer in seconds. The follow-up question "What would our total revenue look like if we reallocated that budget to our top-performing channel?" returns a modeled projection immediately after.
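Behind the scenes, the first question becomes an aggregate query and the follow-up becomes arithmetic on its result. The Python sketch below shows one plausible shape, using sqlite3 and an invented `channel_daily` table (one row per channel per day, with spend and revenue). The SQL is what an NLQ layer might generate, not the output of any specific product, and the projection makes the same linear-returns assumption as any naive reallocation model: the top channel is assumed to keep its current ROAS on the moved budget.

```python
import sqlite3

# Invented sample data: one row per channel per day (ISO date strings).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE channel_daily (channel TEXT, day TEXT, spend REAL, revenue REAL)"
)
conn.executemany(
    "INSERT INTO channel_daily VALUES (?, ?, ?, ?)",
    [
        ("meta", "2025-05-26", 1000.0, 9000.0),  # last week: filtered out
        ("google", "2025-06-02", 1000.0, 4000.0),
        ("meta", "2025-06-02", 1000.0, 800.0),
        ("linkedin", "2025-06-02", 500.0, 1000.0),
        ("programmatic", "2025-06-02", 800.0, 1600.0),
        ("email", "2025-06-02", 200.0, 1200.0),
    ],
)

# SQL an NLQ layer might generate for "Which of our active channels has the
# lowest ROAS this week?" (ROAS = revenue / spend). Sorted weakest first.
ROAS_THIS_WEEK = """
    SELECT channel, SUM(spend), SUM(revenue), SUM(revenue) / SUM(spend) AS roas
    FROM channel_daily
    WHERE day >= ?
    GROUP BY channel
    HAVING SUM(spend) > 0
    ORDER BY roas ASC
"""


def channel_roas(conn: sqlite3.Connection, week_start: str) -> list:
    """Rows of (channel, spend, revenue, roas) since week_start, weakest first."""
    return conn.execute(ROAS_THIS_WEEK, (week_start,)).fetchall()


def project_reallocation(rows: list) -> tuple:
    """Move the weakest channel's spend to the strongest channel.

    Assumes linear returns: the strongest channel keeps its current ROAS on the
    moved budget. Real channels usually show diminishing returns.
    """
    weak_channel, weak_spend, weak_revenue, _ = rows[0]
    strong_channel, _, _, strong_roas = rows[-1]
    current_total = sum(revenue for _, _, revenue, _ in rows)
    projected_total = current_total - weak_revenue + weak_spend * strong_roas
    return weak_channel, strong_channel, current_total, projected_total


rows = channel_roas(conn, "2025-06-02")
print(rows[0])                     # ('meta', 1000.0, 800.0, 0.8)
print(project_reallocation(rows))  # ('meta', 'email', 8600.0, 13800.0)
```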

The marketer who asks those questions at 9:00 AM on Tuesday and acts by 10:00 AM has a structural advantage over every competitor waiting for a Thursday report.

IBM's overview of NLP makes a related point: "NLP enhances data analysis by enabling the extraction of insights from text data... allowing businesses to better understand market conditions and make data-driven decisions more efficiently."

Source: IBM Think — What is natural language processing? https://www.ibm.com/think/topics/natural-language-processing

The insight was always in the data. The barrier was always the tool.

5. Will Natural Language Querying Replace SQL Analysts in Marketing?

The Answer: No. Natural language querying does not replace analysts. It eliminates the analyst bottleneck for routine strategic questions. The analyst's value is not in running reports. It is in interpreting anomalies, building attribution frameworks, and designing measurement infrastructure. NLQ frees analysts to do work that actually requires human expertise. It removes the queue of simple questions that consumes most of their time.

The Analyst Becomes a Strategist

Today's marketing analyst spends a significant portion of their week pulling the same reports in different formats. Weekly channel performance. Campaign attribution by touchpoint. Budget pacing by region. These are mechanical tasks. They require no judgment. They require SQL syntax and a scheduled export.

Natural language querying absorbs all of that workload. The analyst is freed.

The questions that remain, such as "Why did our attribution model shift after the GA4 migration?" or "Is our incrementality measurement accounting for view-through conversions?", require domain expertise. A natural language interface cannot answer them alone. They are the reason you hired an analyst in the first place.

The marketing office of the future is not analyst-free. It is analyst-focused. The routine data retrieval work moves to NLQ. The expert analytical work moves to the analysts who can now actually do it.

Salesforce's research frames the gap: 83% of marketers recognize the need for real-time, data-driven personalization, yet only one in four are satisfied with how they use data. That gap reads less like a skills shortage than a tooling barrier. Equip the team correctly and the execution follows.

Source: Salesforce State of Marketing, 10th Edition https://www.salesforce.com/resources/research-reports/state-of-marketing/

FAQ

Q: Does natural language querying work with data that is already in multiple systems?

A: Yes, if the underlying platform supports federated queries. The query layer must be able to join data across sources — GA4, SQL databases, ad platforms — without moving it into a single warehouse first. Without federated architecture, NLQ only reads a fraction of your actual marketing data.
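Production federated engines connect to remote systems such as ad-platform APIs and warehouses, but the core idea, one query joining tables that live in separate databases, can be shown locally. The sketch below uses SQLite's ATTACH DATABASE as a small stand-in: a hypothetical analytics export and a hypothetical ad-spend table sit in two separate in-memory databases, and a single SQL statement joins them to compute cost per conversion without copying either table into the other. Table names, columns, and figures are invented for illustration.

```python
import sqlite3

# Two separate databases: "main" stands in for an analytics export and
# "ads" for an ad platform. Both are in-memory here purely for illustration.
conn = sqlite3.connect(":memory:")
conn.execute("ATTACH DATABASE ':memory:' AS ads")

conn.execute(
    "CREATE TABLE main.web_sessions (campaign TEXT, sessions INTEGER, conversions INTEGER)"
)
conn.execute("CREATE TABLE ads.ad_spend (campaign TEXT, cost REAL)")
conn.executemany(
    "INSERT INTO main.web_sessions VALUES (?, ?, ?)",
    [("spring_sale", 5000, 125), ("brand_search", 2000, 80)],
)
conn.executemany(
    "INSERT INTO ads.ad_spend VALUES (?, ?)",
    [("spring_sale", 2500.0), ("brand_search", 400.0)],
)

# One statement joins across both databases; neither table is copied.
CROSS_SOURCE_SQL = """
    SELECT w.campaign, a.cost / w.conversions AS cost_per_conversion
    FROM main.web_sessions AS w
    JOIN ads.ad_spend AS a ON a.campaign = w.campaign
    ORDER BY w.campaign
"""
rows = conn.execute(CROSS_SOURCE_SQL).fetchall()
print(rows)  # [('brand_search', 5.0), ('spring_sale', 20.0)]
```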

Q: How accurate is AI-generated SQL from a plain English question?

A: Accuracy depends on the model and on how well it understands your schema. AI data modelers built on large language models such as Gemini 2.0 can achieve high accuracy on well-structured schemas. The key is schema inference: the model must understand how your data is connected before it can translate your question correctly.
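Schema inference can start with something as plain as reading the database catalog. The Python sketch below collects each table's columns and foreign keys from SQLite's `PRAGMA table_info` and `PRAGMA foreign_key_list` and formats them as text that could be included in a model's prompt. The `campaigns` and `conversions` tables are hypothetical, and the sketch does not claim to reproduce how any particular product, Gemini-based or otherwise, builds its schema context.

```python
import sqlite3


def quote_ident(name: str) -> str:
    """Quote an SQL identifier so table names are safe to embed in a PRAGMA."""
    return '"' + name.replace('"', '""') + '"'


def describe_schema(conn: sqlite3.Connection) -> str:
    """Summarize tables, columns, and foreign-key links as prompt-ready text."""
    lines = []
    tables = [
        name
        for (name,) in conn.execute(
            "SELECT name FROM sqlite_master "
            "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name"
        )
    ]
    for table in tables:
        # table_info rows: (cid, name, type, notnull, dflt_value, pk)
        columns = conn.execute(f"PRAGMA table_info({quote_ident(table)})").fetchall()
        lines.append(f"{table}(" + ", ".join(f"{c[1]} {c[2]}" for c in columns) + ")")
        # foreign_key_list rows: (id, seq, table, from, to, on_update, on_delete, match)
        for fk in conn.execute(f"PRAGMA foreign_key_list({quote_ident(table)})"):
            lines.append(f"  {table}.{fk[3]} -> {fk[2]}.{fk[4]}")
    return "\n".join(lines)


conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE campaigns (id INTEGER PRIMARY KEY, name TEXT, channel TEXT);
    CREATE TABLE conversions (
        id INTEGER PRIMARY KEY,
        campaign_id INTEGER REFERENCES campaigns(id),
        revenue REAL
    );
    """
)
print(describe_schema(conn))
```

The foreign-key line (`conversions.campaign_id -> campaigns.id`) is the part that tells a model how the tables connect, which is what lets it write the join for a question like "revenue by campaign".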

Q: What happens when the natural language query produces the wrong answer?

A: In a well-architected system, queries are transparent. The generated SQL is visible and auditable. You can verify what the system asked the database, not just what it returned. Black-box NLQ is a risk. Transparent, inspectable NLQ is a standard.

Q: Can a CMO with no technical background trust a natural language query result for a board presentation?

A: Trust depends on the data infrastructure, not the interface. If the underlying data is clean, deduplicated, and sourced from a verified pipeline, a natural language result is as reliable as any analyst-built report. The question to ask is: does the system show you the query it ran, the data source it pulled from, and the time the data was last refreshed?
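Those three checks map naturally onto the shape of the answer itself. The sketch below is a hypothetical result envelope in Python, not any specific product's API: the `AuditableResult` class, its field names, the `warehouse.campaigns` source name, and the 24-hour freshness threshold are illustrative assumptions. Carrying the SQL, the source, and the refresh time with every answer lets a reviewer verify a number before it reaches a board deck.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AuditableResult:
    """Hypothetical NLQ answer that carries its own provenance."""
    question: str
    sql: str                # the exact query the system ran
    source: str             # where the rows came from
    refreshed_at: datetime  # when that source was last loaded
    rows: tuple

    def is_stale(self, now: datetime, max_age: timedelta) -> bool:
        """True if the underlying data is older than the allowed age."""
        return now - self.refreshed_at > max_age

    def provenance(self) -> str:
        """Human-readable audit trail to show alongside the answer."""
        return (
            f"Query: {self.sql}\n"
            f"Source: {self.source}\n"
            f"Data refreshed: {self.refreshed_at.isoformat()}"
        )


result = AuditableResult(
    question="How much did our Q1 paid campaigns generate?",
    sql=(
        "SELECT SUM(revenue) FROM campaigns WHERE channel = 'paid' "
        "AND date >= '2025-01-01' AND date < '2025-04-01'"
    ),
    source="warehouse.campaigns",  # hypothetical source name
    refreshed_at=datetime(2025, 4, 1, 6, 0, tzinfo=timezone.utc),
    rows=((20000.0,),),
)
now = datetime(2025, 4, 2, 9, 0, tzinfo=timezone.utc)
print(result.provenance())
print(result.is_stale(now, max_age=timedelta(hours=24)))  # True: data is 27 hours old
```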

Q: Is natural language querying only for large enterprises with big data teams?

A: No. The business case is strongest for mid-market teams with no dedicated data engineering resources. These teams have the most to gain from removing the technical translation layer. Enterprise teams with mature data functions use NLQ differently — to extend self-service access to more team members, not to replace existing infrastructure.

Q: How is this different from asking ChatGPT about my marketing data?

A: ChatGPT responds from general training data. It has no access to your actual campaign numbers, attribution models, or pipeline metrics. A purpose-built NLQ layer connects to your live data environment and queries it directly. The answer reflects your real numbers, not a generalized estimate.

CTA

If your team is still filing tickets to answer basic campaign questions, book a session with Data Research Analysis (DRA) and see what real-time natural language querying looks like against your actual data.

Visit dataresearchanalysis.com

References

IBM Think. "What is NLP (natural language processing)?" IBM Corporation, 2024. https://www.ibm.com/think/topics/natural-language-processing

IBM Think. "What is conversational analytics?" IBM Corporation, 2024. https://www.ibm.com/think/topics/conversational-analytics

Gartner. "Gartner Predicts Conversational AI Will Reduce Contact Center Agent Labor Costs by USD 80 Billion in 2026." Gartner Newsroom, August 31, 2022. https://www.gartner.com/en/newsroom/press-releases/2022-08-31-gartner-predicts-conversational-ai-will-reduce-contac

Salesforce. "State of Marketing, 10th Edition." Salesforce Research, 2024. Survey of 4,500 marketing leaders worldwide. https://www.salesforce.com/resources/research-reports/state-of-marketing/

Data Research Analysis. "The Report Lag: Why You Are Making Decisions on 48-Hour-Old Data." https://www.dataresearchanalysis.com/articles/the-report-lag-why-you-are-making-decisions-on-48-hour-old-data

Data Research Analysis. "Why Your Marketing Reports Take 3 Days to Build." https://www.dataresearchanalysis.com/articles/why-your-marketing-reports-take-3-days-to-build

Data Research Analysis. "The Invisible Drain: Is Your Marketing Team Losing 400 Hours a Year to Data Drudgery?" https://www.dataresearchanalysis.com/articles/the-invisible-drain-is-your-marketing-team-losing-400-hours-a-year-to-data-drudgery

Data Research Analysis. "The Hidden Tax on Manual Reporting: Why Your Spreadsheets Are Costing You More Than You Think." https://www.dataresearchanalysis.com/articles/the-hidden-tax-on-manual-reporting-why-your-spreadsheets-are-costing-you-more-than-you-think